HPC Cloud Engineer

Job description

This role is based in Barcelona, with an on-site commitment of three days a week. Fluency in English is required.

Are you ready to scale cloud high performance computing that speeds life-changing medicines to patients? Could you design resilient AWS-based HPC services that scientists and engineers trust every day to model, simulate and decide faster? This role sits at the heart of our mission to reduce time from idea to impact by giving colleagues fast, reliable and secure compute at the moment they need it.

You will join a supportive team of equals that values ownership, curiosity and practical problem solving. We bring diverse expertise together to create new answers, pairing deep engineering with a disciplined, outcome-focused mindset. If you like building robust platforms, measuring what matters and turning complexity into clarity, you will thrive here.

Accountabilities

- Develop, deliver and operate high performance computing clusters and applications on AWS.

- Take a Site Reliability Engineering approach to HPC services, managing development, deployment, monitoring and incident response end-to-end.

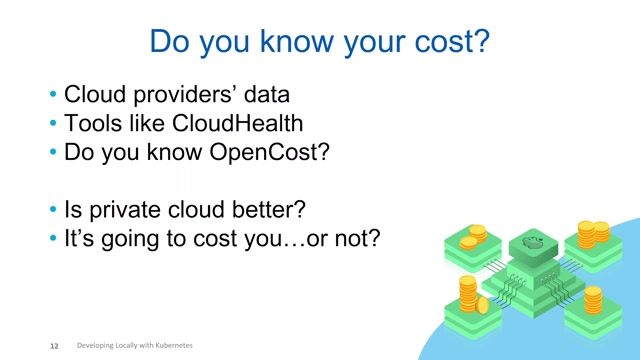

- Continuously monitor cloud spend to ensure efficiency and cost-effectiveness.

- Keep the cloud HPC infrastructure updated and aligned with AstraZeneca's security standards.

- Solve complex technical problems, both with SCP services and with users' use of them.

Here, cloud engineering meets cutting-edge science with real-world stakes. You will work with people who combine discipline and imagination, using digital technologies to turn complex pipelines into delivered medicines. We value kindness alongside ambition, and we back bold ideas with the support needed to make them real, putting diverse minds together to spark better solutions. Your contribution will be visible: more reliable compute, lower costs, faster iteration and, ultimately, better outcomes for patients and society.

Requirements

- Experience in development, deployment automation and management of large-scale infrastructure on AWS, especially autoscaling HPC clusters.

- Strong understanding of cloud best practices, such as the AWS Well-Architected Framework.

- Experience administering or programming in a Linux environment.

- Experience with Terraform infrastructure-as-code, Python programming and Bash scripting.

- Strong communicator, able to explain technical IT concepts in a way that non-IT experts can understand.

- Hands-on experience working in a DevOps team and using agile methodologies.

- Experience helping users migrate HPC workloads from on-prem clusters to a cloud environment.

Desirable Skills/Experience:

- Ideally, you will be an AWS Certified Solutions Architect.

- You will have a scientific degree, and/or experience in computationally intensive analysis of scientific data.

- Previous experience in high performance computing (HPC) environments, especially at large scale (>10,000 cores).

Plus some of the following areas of expertise:

- Hands-on knowledge of a range of scientific and HPC applications such as simulation software, bioinformatics tools or 3D data visualisation packages.

- Experience administering and optimising SLURM.

- Experience with software distribution frameworks such as EasyBuild or Spack.

- Expertise in GPU, AI/ML tools and frameworks (CUDA, TensorFlow, PyTorch).

- Understanding of parallel programming techniques (e.g. MPI, pthreads, OpenMP) and code profiling/optimisation.

- Experience with workflow engines (e.g. Apache Airflow, Nextflow, Cromwell, AWS Step Functions).

- Familiarity with container runtimes such as Docker, Singularity or enroot.

- Knowledge of a specific research computing domain, such as deep learning, medical imaging, molecular dynamics or 'omics.

- Experience with frameworks for regression testing and benchmarking of HPC applications, such as ReFrame.

When we put unexpected teams in the same room, we unleash bold thinking with the power to inspire life-changing medicines. In-person working gives us the platform we need to connect, work at pace and challenge perceptions. That's why we work, on average, a minimum of three days per week from the office. But that doesn't mean we're not flexible. We balance the expectation of being in the office while respecting individual flexibility. Join us in our unique and ambitious world.