Kafka Developer

Job description

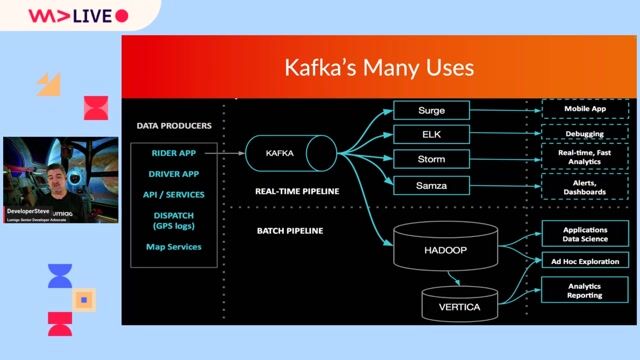

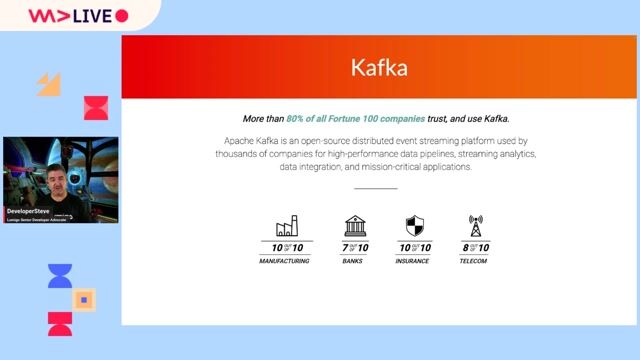

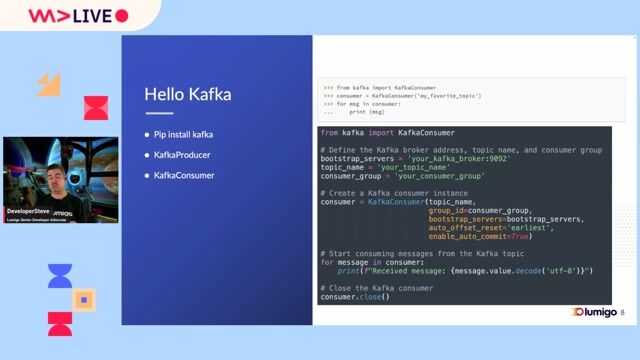

Pipeline Development: Take ownership of the CDC ingestion framework built on Kafka connectors (Debezium, Iceberg sink, S3 sink).

Containerized Infrastructure Management: Deploy and manage Debezium and Kafka Connect workers as Docker containers orchestrated on AWS ECS (Elastic Container Service), with images stored in AWS ECR.

Data Lake Integration: Manage data ingestion into AWS S3 using the Parquet and Apache Iceberg formats.

Infrastructure as Code: Use Terraform to provision and manage the AWS resources that support the data platform.

CI/CD: Build and maintain deployment pipelines using GitHub and GitHub Actions.

Operational Excellence: Monitor pipeline health, troubleshoot connectivity issues, and ensure the reliability of the Kafka ecosystem.

Optional: Support and optimize workflow orchestration using Airflow where applicable.

Tech stack

Streaming: Apache Kafka, Kafka Connect, Debezium

Compute/Containerization: AWS ECS, AWS ECR, Docker

Storage/Format: AWS S3, Apache Iceberg, Parquet

DevOps: Terraform, GitHub Actions

Languages: Python, Bash

Optional Orchestration: Apache Airflow

Requirements

Apache Kafka & Kafka Connect: Multiple years of hands-on experience configuring, deploying, and managing Kafka Connect clusters in a production environment.

Containerization: Extensive experience with Docker is required. You must be comfortable building images and managing container lifecycles.

AWS Compute: Proven experience running containers on AWS ECS and managing images via AWS ECR.